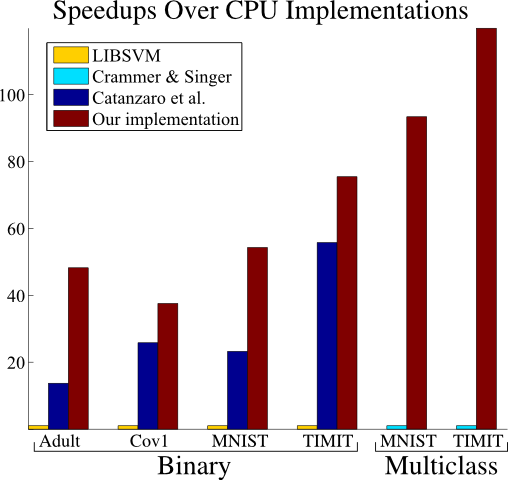

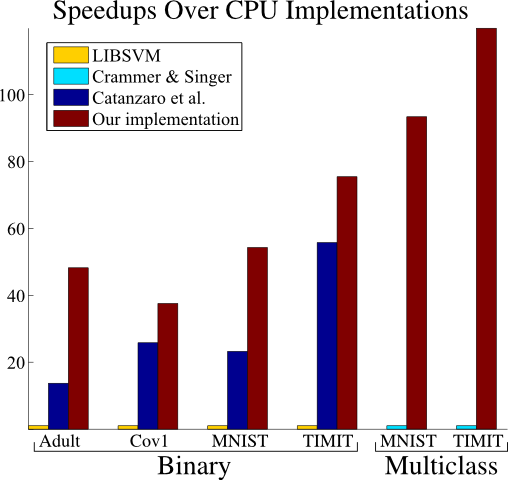

This is an implementation of an algorithm for efficiently training a binary or multiclass kernelized Support Vector Machine (SVM) on a Graphics Processing Unit (GPU). Our approach is distinguished from earlier work in that it cleanly and efficiently handles sparse datasets, and is also specifically designed to take maximal advantage of the graphics hardware. This leads to different algorithmic choices then those preferred in serial implementations, whereas previous GPU implementations have tended to be straightforward parallelizations of successful serial algorithms. Our library is orders of magnitude faster then existing CPU libraries, and up to several times faster than prior GPU approaches. We have made every effort to ensure that this library is not only efficient, but also very easy-to-use.

The implementation is in C/C++, except for a small Matlab script which wraps the MEX interface. Nearly all development and testing was done under Linux (64-bit Ubuntu), although experimental Windows support has recently been added. In order to compile the C library interface and command-line programs, you'll require the boost libraries, as well as CUDA. For the Matlab interface, of course you'll need Matlab.

GTSVM runs very slowly on some newer NVIDIA cards. I don't know the reason for this (if you do, please let me know). Before using GTSVM, you should perform a simple performance comparison with, say, LIBSVM, to check if you are affected.

All source code is copyrighted, and licensed under the GPLv3.

TAR.GZ ZIP Source code gtsvm_src.tgz (65K) gtsvm_src.zip (107K)

If you want to follow along with the usage section (below), you'll need a copy of the Adult dataset. Other datasets might be useful for experimenting with the library's features.

TAR.GZ ZIP Adult dataset adult.tgz (1.1M) adult.zip (1.1M) Forest covertype-1 dataset cov1.tgz (18M) cov1.zip (18M) MNIST dataset mnist.tgz (25M) mnist.zip (25M) TIMIT dataset timit.tgz (24M) timit.zip (24M)

Adult and covertype-1 are both binary datasets. The multiclass MNIST and TIMIT datasets are included to permit you to experiment with multiclass classification. The adult archives contain .dat files (in SVM-Light format) in addition to .mat files, but the rest only include .mat files.

The adult and MNIST datasets were downloaded from Léon Bottou's LaSVM web page. The covertype-1 dataset is originally due to Jock Blackard and Denis Dean, but was received from Thorsten Joachims by way of Shai Shalev-Shwartz. This processed version of the well-known TIMIT speech corpus was created by, and provided by, Joseph Keshet.

When you untar (or unzip) the archive file, four directories will be created: "lib", "bin", "mex" and "VS2010". The first three of these contain the source code for the C library interface, command-line interface and Matlab interface, respectively. The fourth, "VS2010", contains a Visual Studio solution (created in VS Express 2010) which builds the library and command-line interface.

The upper-level directory contains a Makefile, which you will probably need to modify. The "SUBDIRS" variable lists the subdirectories to build. You'll always want to build both the C library ("lib") and the command-line programs ("bin"), but if you don't have Matlab installed, or don't want to build the Matlab interface for any other reason, you should remove "mex" from this list. The "PLATFORM_CPU" variable determines whether to use SSE2 instructions--you probably do want to use these instructions, so keep this variable set to "sse2". If the "PLATFORM_GPU" variable is empty, then the CUDA code will target compute capability 1.0. If you have a card which supports compute capability 1.3 or later, then you might want set this variable to "sm_13" (it should make little difference). The final important variable is "BITS", which should be either "32" or "64"--if you're building the Matlab interface, be sure to use "BITS=32" for 32-bit Matlab, and "BITS=64" for 64-bit Matlab. Once you're done editing the Makefile, simply run "make" in the upper-level directory.

The C library interface will be contained in the files "lib/libgtsvm.a", which you should copy into a library directory (say "/usr/local/lib") and "lib/gtsvm.h", which you should copy into an include directory (say "/usr/local/include"). The command-line programs "bin/gtsvm_initialize", "bin/gtsvm_optimize", "bin/gtsvm_shrink", "bin/gtsvm_classify", "bin/gtsvm_recalculate" and "bin/gtsvm_restart" should be copied into a directory in your path (say "/usr/local/bin"). Finally, the Matlab interface will be contained in the files "mex/gtsvm_mex.mexa64" ("mex/gtsvm_mex.mexglx" if you built with BITS=32 in the Makefile) and "mex/gtsvm.m", both of which should be copied into a directory in your Matlab path.

A disclaimer: GTSVM was developed entirely under Linux, and has only been tested in the most cursory fashion under Windows.

To begin with, make sure that you've installed the boost libraries and CUDA development tools. For boost, I used the boostpro installer, selecting the "Multithreaded" and "Multithreaded Debug" libraries. However you choose to install boost, you must set the "BOOST_ROOT" environment variable to the root directory of your boost installation (for me, this was "C:\Program Files\boost\boost_1_47")--this enables the VS projects to locate boost.

Once this is done, open up "VS2010\VS2010.sln" in Visual Studio, and build everything. This will create the library "gtsvm.lib", as well as executables for all of the command-line programs, placing these files in either "VS2010\Release" or "VS2010\Debug" (depending on the configuration).

The Matlab interface is not built within Visual Studio. Instead, you should open up Matlab, change to the "mex" subdirectory of wherever you placed GTSVM, and run either "windows_make_release.m" or "windows_make_debug.m". This will create "gtsvm_mex.mexw32", the mex-file needed by the matlab interface in "gtsvm.m".

All of the command-line programs accept the "-h" option, which will display (hopefully fairly useful) help, describing all of the options, and how they should be used:

> gtsvm_initialize -h

In this, and all other example command-lines, the ">" character represents the prompt, with the remainder of the text being the command you should type. You may also view the help for each program by following these links: gtsvm_initialize, gtsvm_optimize, gtsvm_shrink, gtsvm_classify, gtsvm_recalculate and gtsvm_restart.

For the remainder of this section, I'll go through an example of how to use these programs. Download the adult dataset from the downloads section above, before proceeding further. The archive should contain the files "adult_training.dat" and "adult_testing.dat", both of which are in SVM-Light format.

We'll start by creating a model file from this dataset:

> gtsvm_initialize -f adult_training.dat -o adult.mdl -C 1 -k gaussian -1 0.05

The "-f" option specifies the dataset file, and "-o" the model file to create. The regularization parameter is given by the "-C" parameter (in the C-SVM formulation), while the "-k gaussian" and "-1 0.05" parameters tell the program to use a Gaussian kernel, with gamma (the first and only parameter to the Gaussian kernel) equal to 0.05. The resulting model file is initialized to the zero classifier--optimization is a separate process from model file creation.

The next step is to optimize the SVM problem:

> gtsvm_optimize -i adult.mdl -o adult.mdl -e 0.01 -n 1000000

Here, both the input ("-i") and output ("-o") parameters are the same model file, which will be overwritten once optimization completes. The "-e" parameter gives the termination criterion, in terms of the normalized duality gap--it will be satisfied once 2(p-d)/(p+d)<epsilon, where p is the value of the primal objective, and d the dual. The "-n" parameter gives the maximum number of iterations to perform--generally, this should be set to some absurdly high number, so that it is the epsilon termination criterion which is ultimately satisfied.

Because a model file is essentially a snapshot of the current optimization state, it contains some irrelevant information, once optimization has been completed. In particular, it contains all of the training vectors, even those which have associated dual variables which are equal to zero. This causes an optimized model file to be overlarge, and also can hurt classification performance. For this reason, we will use the gtsvm_shrink program to remove all irrelevant training examples:

> gtsvm_shrink -i adult.mdl -o adult_shrunk.mdl

This accomplished, we may classify the testing dataset:

> gtsvm_classify -f adult_testing.dat -i adult_shrunk.mdl -o adult_testing.txt

The dataset to classify, given by the "-f" parameter, is once again assumed to be in SVM-Light format (which means that it must contain label fields, although these will be ignored). The output file will be in text format, containing one floating point number per line, equal to the value of the classification function on the corresponding testing example. The sign of this number is the classification.

Two programs have not been mentioned: gtsvm_recalculate and gtsvm_restart. During optimization, certain running sums are kept, in which, over time, numerical errors may accumulate. The gtsvm_recalculate program simply recalculates all of these sums from scratch. In practice, we have not found its use to be necessary. The second program, gtsvm_restart, is essentially a replacement for gtsvm_initialize, in the case in which you want to create a new model file from the dataset contained in an existing model file, rather than a SVM-Light dataset file.

It might be helpful to take a look at the Matlab interface to the underlying mex file, gtsvm.m, before reading this section.

The "sample dataset" archives in the downloads section contain the file "adult.mat", which contains the training set in the "training_vectors" and "training_labels" variables, and the testing set in the "testing_vectors" and "testing_labels" variables. Start Matlab, and load this file:

> load( 'adult.mat' );

The first step in using the Matlab interface is to create a "context", which will contain all of the state associated with the current optimization problem:

> context = gtsvm;

We will next initialize the context, by telling it learn a binary classifier (the "false" parameter) based on training_vectors and training_labels, with regularization parameter C=1, a Gaussian kernel with gamma=0.05, and an unregularized bias (the "true" parameter):

> context.initialize( training_vectors, training_labels, false, 1, 'gaussian', 0.05, 0, 0, true );

Kernels can take up to three parameters, all of which must be specified. Since the Gaussian kernel only takes one parameter (gamma), we give zero as the values of the other two.

We now optimize the problem, with, as in the command-line case, epsilon=0.01 and n=1000000. We'll then classify the testing dataset, placing the result into the "classifications" variable. Finally, we calculate and display the testing classification error (about 15%):

> context.optimize( 0.01, 1000000 ); > classifications = context.classify( testing_vectors ); > sum( ( classifications > 0 ) ~= testing_labels ) / length( testing_labels )

if we want to save this context to a model file:

> context.save( 'adult.mdl' );

Let's try the same thing, with a degree-3 polynomial kernel. This kernel takes three parameters, and has the form K(x,y)=(p1*<x,y>+p2)p3. The "restart" function simply re-initializes the context to the zero classifier, using the same training dataset but different values of the parameters. The fact that we're using "restart" here is the reason that we didn't call "shrink" after the above example: we want all of the training vectors to be present in the restarted context. We'll now use C=0.5, and will take K(x,y)=(0.05*<x,y>+1)3, with no unregularized bias (the "false" parameter):

> context.restart( 0.5, 'polynomial', 0.05, 1, 3, false ); > context.optimize( 0.01, 1000000 ); > context.shrink; > classifications = context.classify( testing_vectors ); > sum( ( classifications > 0 ) ~= testing_labels ) / length( testing_labels )

This should again give roughly 15% error. To save memory, we may now destroy the context:

> clear context;

If we want to keep the context around, but free the memory which it has allocated for the training dataset (this has the effect of resetting it to the empty context):

> context.deinitialize;

If we only want to free graphics card's memory, but want to keep the training dataset on the host machine (this preserves the context's state--memory will be re-allocated on the graphics card as soon as it is needed):

> context.deinitialize_device;

I'm not going to give a detailed description of the C library interface, although if you've gone through the usage of the command-line and Matlab interfaces, then most of the functions will be familiar. You might want to look at the header file gtsvm.h, and read through the source code for the command-line programs (in the "bin" directory), all of which are pretty simple.

The interface is likely to look "weird" to a C programmer--in large part, this was done in order to make it easier to interface with Matlab. For example, vectors and matrices are passed as void pointers, with an additional enumeration parameter specifying the type. Similarly, functions which accept matrices also take the flag "columnMajor", which indicates whether the matrices are stored in column-major (as opposed to row-major, the default in C) format.